Nvidia’s decision to invest $2 billion each in photonics makers Lumentum and Coherent is not just another artificial intelligence headline. It is a sign that the next bottleneck in AI is increasingly about moving data, not just crunching it.

If Nvidia can push more traffic through its data centers with fewer watts and less heat, that can translate into lower emissions and less water stress in the places where those servers sit.

The flip side is that faster networks can also accelerate the buildout of “AI factories,” which is already stretching grids and cooling systems across the U.S. and beyond.

Photonics shows up where AI really hurts: networking

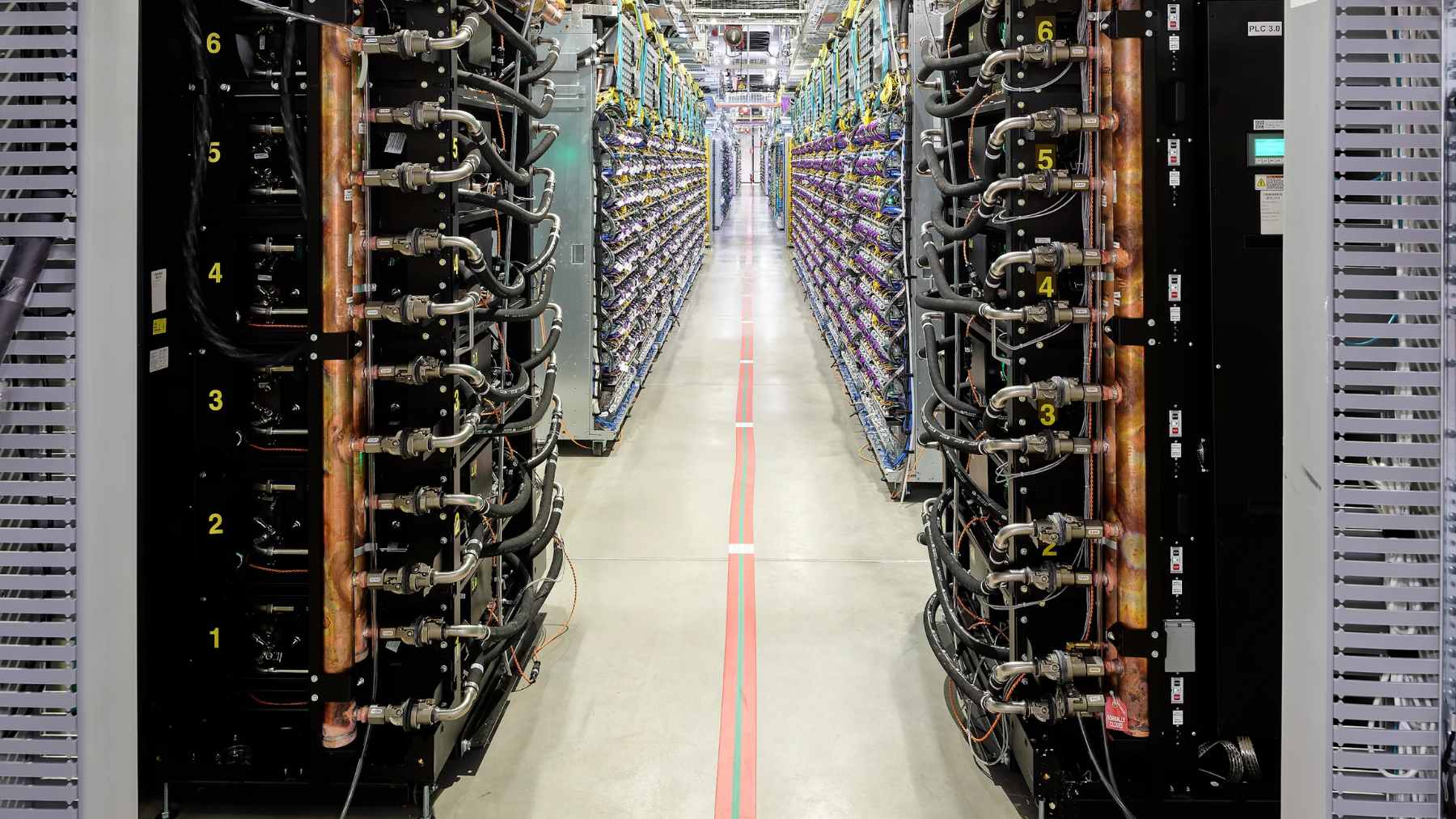

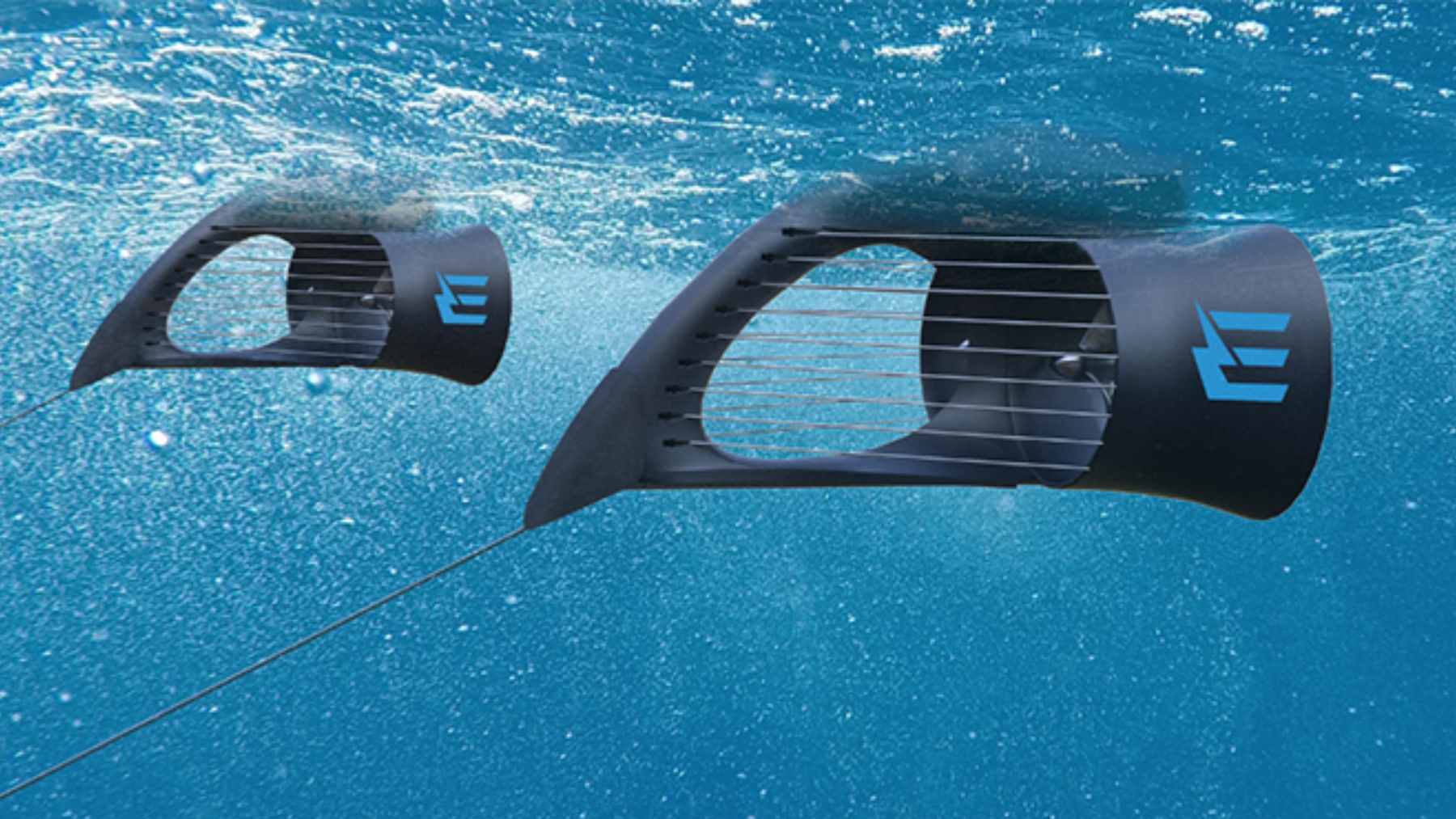

AI clusters are hungry not just for computing power but for data movement. Photonics uses light instead of electrical signals to move information between chips and racks, which can cut latency and reduce the energy lost as heat in copper interconnects.

Nvidia says the tie-ups with Lumentum and Coherent include multibillion-dollar purchase commitments and access rights for advanced lasers and optical networking products. In practical terms, that is about securing supply for silicon photonics and “co-packaged optics,” where optics sits closer to the computation package instead of at the edge of the rack.

The physics matters because the faster the model runs, the more often it has to move data across the network. A recent Nature Electronics paper reported a silicon photonics transmitter that uses less than one picojoule per bit, a sign of what is possible at the component level.
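To put a per-bit figure in context, the power a link spends on moving data is the energy per bit multiplied by the bit rate. The short Python sketch below shows that conversion. The function name, the 800 Gb/s link rate, and the sample energy values are illustrative assumptions, not numbers from the paper or from Nvidia.

```python
def link_power_watts(energy_pj_per_bit: float, rate_gbps: float) -> float:
    """Power spent moving data: energy per bit times bits per second."""
    joules_per_bit = energy_pj_per_bit * 1e-12   # picojoules -> joules
    bits_per_second = rate_gbps * 1e9            # gigabits/s -> bits/s
    return joules_per_bit * bits_per_second


if __name__ == "__main__":
    # Hypothetical energy-per-bit values, chosen only to show the scaling.
    for pj in (1.0, 5.0, 15.0):
        watts = link_power_watts(pj, rate_gbps=800)
        print(f"{pj:4.1f} pJ/bit at 800 Gb/s -> {watts:.1f} W")
```

Under these assumptions, a link at one picojoule per bit would draw under a watt for data movement, and the figure scales linearly with both the energy per bit and the link rate. Multiplied across the many links in a large cluster, small per-bit differences add up.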

The hidden climate cost of electricity, water, and heat

Most people notice AI in apps. Utilities notice it in peak demand forecasts, which can feel a lot less abstract when the summer heat sticks around and the grid is already strained.

The International Energy Agency projects that electricity demand from data centers worldwide is set to more than double by 2030 to around 945 terawatt-hours, and it points to AI as the biggest driver of that increase. That single number helps explain why chip performance and power efficiency are now part of the same conversation.

In the U.S., the pressure is showing up in regional grid markets. Pew Research Center pointed to PJM Interconnection’s capacity-market price spike and estimated a $9.3 billion price increase for the June 2025 to May 2026 period, with some residential bills projected to rise by about $18 a month in western Maryland and $16 a month in Ohio.

Cooling is the quiet environmental fight

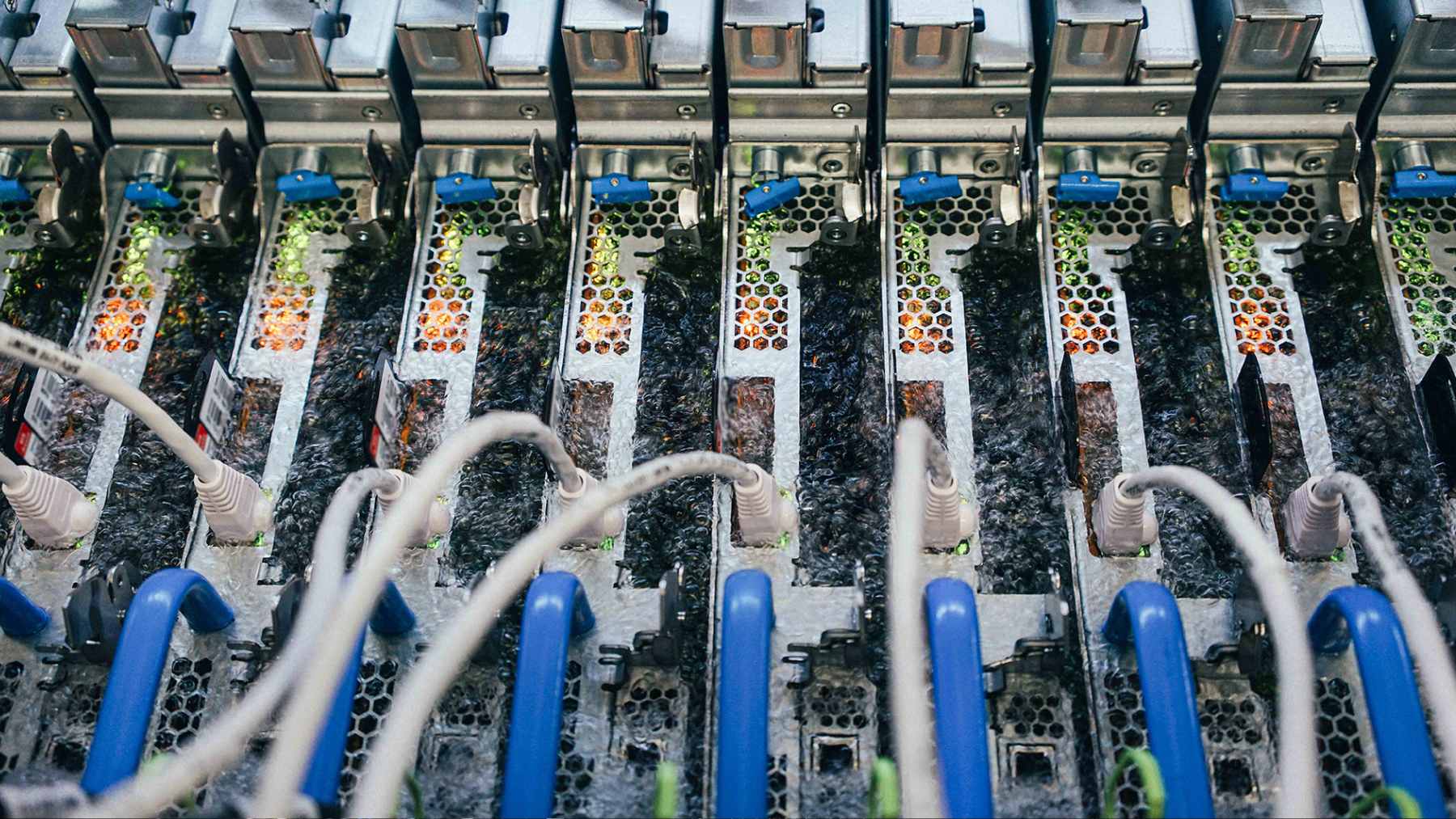

The other half of the climate story is cooling. Data centers increasingly compete for water in places where drought is becoming a new normal, and operators have to choose between water-heavy, evaporation-based cooling and more electricity-heavy mechanical systems.

Researchers at Lawrence Berkeley National Laboratory estimate U.S. data centers used about 176 terawatt-hours of electricity in 2023, and they warn that water use can spike during heat waves. That is exactly when grids are already under stress, which is why this topic keeps showing up in utility planning meetings, not just tech conferences.

This is why liquid cooling has become a major talking point for AI hardware. It can move heat away from dense chips more efficiently than air, but it introduces new materials, pumps, and maintenance requirements, so the real environmental win depends on cleaner electricity and careful water management.

Manufacturing and the U.S. footprint question

Nvidia framed the investments as a way to help Lumentum and Coherent build out U.S. manufacturing capabilities. Lumentum’s CEO said the company would invest in a new fabrication facility to increase capacity, and the deal includes future capacity access rights that matter when supply chains get tight.

On paper, domestic manufacturing can reduce some shipping emissions and improve oversight of environmental and labor standards. On the other hand, chip and photonics fabs are energy- and chemical-intensive, and the local impact depends heavily on how the facility is powered and how waste is handled.

There is also the rebound effect to watch. If photonics makes AI infrastructure cheaper to run, demand can rise even faster, and that can widen the hardware footprint and eventually show up on electric bills. Recent research on digital infrastructure footprints warns that efficiency gains do not automatically translate into lower total emissions without a parallel clean power buildout.
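The rebound effect comes down to simple arithmetic: total energy is energy per unit of work multiplied by the amount of work done. The Python sketch below illustrates that relationship. The function names and the 30 percent, 20 percent, and 50 percent figures are hypothetical values chosen for illustration, not estimates from the research mentioned above.

```python
def total_energy_ratio(efficiency_gain: float, demand_growth: float) -> float:
    """New total energy divided by old total energy.

    efficiency_gain: fractional cut in energy per unit of work (0.3 = 30% less).
    demand_growth:   fractional rise in work performed (0.5 = 50% more).
    """
    return (1.0 - efficiency_gain) * (1.0 + demand_growth)


def break_even_growth(efficiency_gain: float) -> float:
    """Demand growth at which the efficiency gain is fully cancelled out."""
    return 1.0 / (1.0 - efficiency_gain) - 1.0


if __name__ == "__main__":
    gain = 0.30  # hypothetical: 30% less energy per unit of work
    for growth in (0.20, 0.50):  # hypothetical demand-growth scenarios
        ratio = total_energy_ratio(gain, growth)
        print(f"growth {growth:.0%}: total energy x{ratio:.2f}")
    print(f"break-even demand growth: {break_even_growth(gain):.1%}")
```

In this toy model, a 30 percent efficiency gain lowers total energy use only if demand grows by less than about 43 percent. Beyond that point the total footprint rises even though each unit of work uses less energy.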

Military AI is another kind of data center problem

Nvidia’s investment story sits in the commercial world, but AI’s energy and environmental footprint does not stop there. The U.S. Department of Defense has been moving AI tools deeper into operations, and terms like “program of record” signal when a capability is no longer a pilot but something built to scale.

That matters for the climate because militaries run huge computing workloads, often in harsh environments where power is expensive and logistics are fragile. A more network-hungry, sensor-heavy force can mean more fuel for generators and more demand for resilient grids, even before you get to the emissions from operations.

It is also a reminder that “efficient AI” is not automatically “green AI.” The most energy-efficient model in a lab still has to run somewhere, and the decision to scale it can outweigh marginal technical gains.

What readers should keep in mind

Nvidia’s $4 billion photonics bet is a snapshot of where the AI industry thinks the next limit is. Faster interconnects can help reduce wasted energy and heat per unit of computation, which matters when every new rack pushes local infrastructure harder.

But the environmental scorecard will be written by what comes next: where new data centers are built, how clean the electricity is, how water is managed, and whether regulators require transparency. The trouble is that the clock is moving faster than the politics.

If you are watching this as a consumer, the practical question is not just whether AI gets smarter. It is whether the companies building it can keep your grid reliable and your region’s water supply stable, without turning efficiency gains into a bigger total footprint.

The press release was published on NVIDIA Newsroom.