Nvidia says it has restarted manufacturing of an AI chip variant built to comply with US export restrictions on China, a move CEO Jensen Huang discussed this week at the company’s GTC event in San Jose.

That may sound like another chapter in the US/China tech tug of war, but it also points to a quieter constraint that is getting harder to ignore. AI hardware is now tied to electricity demand, water use, and industrial emissions in ways that can affect everyone, from hyperscalers to households staring at the electric bill.

A restart built around export licenses

Huang said Nvidia had paused production of its H200-based chip last year amid regulatory hurdles, then resumed after receiving US government export licenses and customer orders. He summed it up with a simple line, “Our supply chain is getting fired up.” (reuters.com)

The company has been clear that this China-focused business is not what underpins its bigger growth story. Reuters reported that Nvidia’s forecast of more than $1 trillion in revenue by the end of 2027 centers on newer Blackwell and Rubin systems rather than this China-compliant track.

The AI boom meets the power grid

The environmental angle starts with the obvious question. Where does all that computing energy come from when data centers scale up in a hurry?

The International Energy Agency estimates data centers used around 415 terawatt hours of electricity in 2024, roughly 1.5% of global consumption, and projects that global data center electricity use could rise to around 945 terawatt hours by 2030 in its base case. The IEA also stresses there is real uncertainty here, which is exactly why grid planners are nervous.

In practical terms, that means the bottleneck is not always chips. The IEA estimates that unless grid and supply risks are addressed, around 20% of planned data center projects could face delays. Wait times for key grid equipment such as transformers and cables have also been rising in recent years.
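To put the IEA base case in annual terms: going from about 415 TWh in 2024 to about 945 TWh in 2030 is more than a doubling, which works out to roughly 15% compound growth per year. The short Python sketch below does that arithmetic. The function name is our own, and treating the two IEA figures as endpoints of a smooth compound-growth curve is a simplifying assumption, not how the IEA models the path between them.

```python
def implied_annual_growth(start: float, end: float, years: int) -> float:
    """Compound annual growth rate that takes `start` to `end` over `years` years."""
    if start <= 0 or years <= 0:
        raise ValueError("start and years must be positive")
    # Assumes smooth compound growth between the two endpoints (a simplification).
    return (end / start) ** (1 / years) - 1


# IEA figures quoted above, in terawatt hours (TWh).
usage_2024_twh = 415
usage_2030_twh = 945  # base-case projection

rate = implied_annual_growth(usage_2024_twh, usage_2030_twh, 2030 - 2024)
print(f"Implied growth: {rate:.1%} per year")
```

Run as written, it prints an implied growth rate of about 14.7% per year.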

Efficiency is now part of the narrative

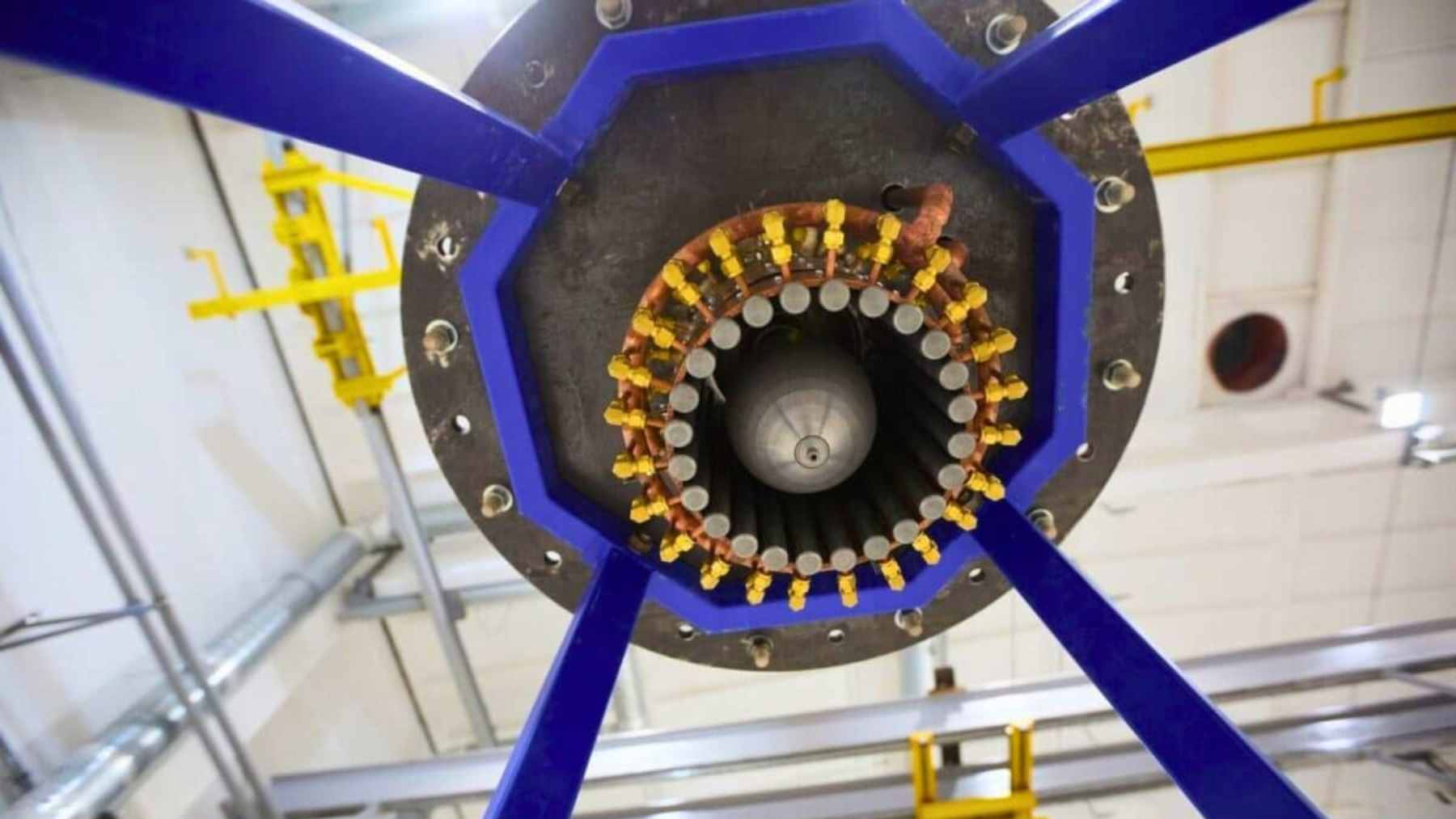

This is where Nvidia’s product roadmap and the climate conversation start to overlap. Newer chips are not just faster; they are also marketed as more efficient, and that matters when AI workloads run 24/7.

On its sustainable computing page, Nvidia argues that efficiency gains are becoming central to AI economics, including claims that Blackwell Ultra features can deliver major improvements in energy efficiency per token for inference workloads. Those are vendor claims, but the direction of travel is clear, and buyers are paying attention because power is now a top operating cost.

Still, efficiency can cut two ways. If computation becomes cheaper per task, companies may simply run more tasks, more often, in more places, and the total load can keep rising even as each run gets leaner.
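The rebound arithmetic is easy to sketch. The numbers below are purely hypothetical, not Nvidia or IEA figures: if each task needs 30% less energy but the number of tasks doubles, total energy use still rises by 40%.

```python
def total_energy(energy_per_task_kwh: float, tasks: float) -> float:
    """Total energy is simply energy per task multiplied by the number of tasks."""
    return energy_per_task_kwh * tasks


# Hypothetical baseline: 1.0 kWh per task, 1,000 tasks.
before = total_energy(1.0, 1_000)

# Hypothetical upgrade: each task is 30% leaner, but cheaper compute
# encourages twice as many tasks.
after = total_energy(1.0 * 0.7, 1_000 * 2)

print(f"Before: {before:.0f} kWh, after: {after:.0f} kWh "
      f"({after / before - 1:+.0%} change)")
```

In general, total load rises whenever the growth factor in tasks multiplied by the remaining energy per task exceeds 1 (here, 2 × 0.7 = 1.4).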

Water and chemicals are the quiet constraint

Electricity gets the headlines, but water is the sleeper issue, especially as more regions deal with drought and tighter permitting. A February 2026 case study from the Taskforce on Nature-related Financial Disclosures warns that data centers and microchip manufacturing are both deeply dependent on reliable water supplies, sometimes in the same stressed basins.

That report cites wide ranges, but the numbers are still striking. It says a typical data center can use tens to hundreds of millions of liters of water per year, depending on size; hyperscale facilities may exceed 2 billion liters (528 million gallons) annually; and a single semiconductor fab can use around 14 billion liters (3.7 billion gallons) of ultrapure water per year.

It also notes that 45% of data centers globally are located in river basins at high risk of water availability disruptions.
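The report's figures mix liters and US gallons, so a small conversion helper makes them easy to check. It uses the exact definition of the US liquid gallon (3.785411784 liters); the helper and constant names are our own.

```python
# Exact by definition: 1 US liquid gallon = 3.785411784 liters.
LITERS_PER_US_GALLON = 3.785411784


def liters_to_gallons(liters: float) -> float:
    """Convert liters to US liquid gallons."""
    return liters / LITERS_PER_US_GALLON


hyperscale_liters = 2e9  # "may exceed 2 billion liters" per year
fab_liters = 14e9        # "around 14 billion liters" of ultrapure water per year

print(f"Hyperscale data center: {liters_to_gallons(hyperscale_liters) / 1e6:.0f} million gallons")
print(f"Semiconductor fab: {liters_to_gallons(fab_liters) / 1e9:.1f} billion gallons")
```

Run as written, it shows roughly 528 million gallons for the hyperscale figure and about 3.7 billion gallons for the fab figure.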

Then there is the chemical footprint. The US Environmental Protection Agency notes that semiconductor manufacturing uses fluorinated compounds with high global warming potential, which is why emissions tracking and abatement have been long-running industry issues.

Defense logic keeps shaping the market

Export controls exist for a reason, and the defense framing is explicit in US rulemaking. The Federal Register language on updated advanced computing controls says the goal is to protect national security by restricting China’s ability to obtain critical technologies that could modernize military capabilities and support sensitive applications.

That is the policy pressure behind compliant variants and licensing decisions, including the kind of restart Nvidia is now describing. As these rules evolve, the technical details matter too, such as how US regulators adjust chip performance thresholds to limit workarounds.

The bigger takeaway is that the AI chip race is no longer just about who has the most silicon. It is also about who can secure enough clean power, enough water, and enough community trust to build and run the next generation of AI infrastructure without blowing up climate targets along the way.

The case study was published by the TNFD.