If ChatGPT was the spark that got everyone talking, “agentic AI” is shaping up to be the appliance that stays plugged in all day. At Nvidia’s GTC conference in San Jose, CEO Jensen Huang pitched a near future where software agents can run your computer, handle errands, and complete multi-step work on your behalf.

That vision has a very physical footprint. “Always on” agents do not just change how we use software; they also change how much electricity data centers pull from the grid and how much water is needed to keep racks from overheating. That is where the environmental story gets real.

OpenClaw turns “agentic AI” into a mainstream product

OpenClaw is an open-source agent framework that fans describe as an assistant that can manage emails, deal with paperwork, and take actions across apps. Its creator, Peter Steinberger, joined OpenAI earlier this year, while the project moved to a foundation meant to keep it open source.

The adoption curve has been the headline. Reuters reported that OpenClaw surged past 100,000 stars on GitHub and drew about 2 million visitors in a single week, a sign that “agents” are moving from demos to downloads.

Nvidia wants to be the company that sells the picks and shovels for that gold rush. Huang argued that “every company in the world today needs to have an OpenClaw strategy,” and Nvidia announced NemoClaw, a stack meant to add privacy and security controls for enterprise use.

The agent era runs on electricity, not vibes

There is a simple reason data center operators are paying attention. Agents that take actions tend to run longer, and the industry’s shift toward more “inference” work means more computation cycles per user request, not fewer.

By the International Energy Agency’s estimate, data centers consumed about 415 terawatt hours of electricity in 2024, around 1.5% of global electricity use, after growing roughly 12% per year over the last five years. In its base case, the IEA projects data center electricity use could more than double to around 945 terawatt hours by 2030.
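The IEA figures can be sanity-checked with a few lines of arithmetic. The sketch below uses only the rounded numbers quoted in this article; the helper names are illustrative, and the outputs are approximations, not IEA-published values.

```python
# Back-of-envelope check of the IEA data center figures quoted in the article.
# Inputs are rounded, so every result here is approximate.

BASE_YEAR = 2024
TARGET_YEAR = 2030
BASE_TWH = 415.0     # data center electricity use in 2024 (IEA estimate)
TARGET_TWH = 945.0   # IEA base-case projection for 2030
BASE_SHARE = 0.015   # ~1.5% of global electricity use in 2024


def implied_cagr(start: float, end: float, years: int) -> float:
    """Compound annual growth rate that turns `start` into `end` over `years`."""
    return (end / start) ** (1 / years) - 1


def implied_total(part: float, share: float) -> float:
    """Total implied when `part` makes up `share` of it."""
    return part / share


years = TARGET_YEAR - BASE_YEAR
growth = implied_cagr(BASE_TWH, TARGET_TWH, years)
ratio = TARGET_TWH / BASE_TWH
global_twh = implied_total(BASE_TWH, BASE_SHARE)

print(f"2030 / 2024 ratio: {ratio:.2f}x")
print(f"Implied growth rate: {growth:.1%} per year")
print(f"Implied global electricity use in 2024: ~{global_twh:,.0f} TWh")
```

Going from 415 to 945 terawatt hours over six years works out to roughly 14.7% growth a year, a faster pace than the roughly 12% annual growth of the previous five years.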

That global share can sound abstract, right up until you picture the local substation down the road. The IEA also warns that data centers cluster in specific places, which can make grid integration harder even if the global percentage still looks “manageable” on paper.

Clean power contracts are getting larger, pricier, and more political

The clean energy market is already reacting to AI-driven load growth. Reuters reported that average North American solar power purchase agreement (PPA) prices in Q4 2025 rose 9% year over year to $61.7 per megawatt hour, while wind PPAs rose 9% to $73.7 per megawatt hour, based on LevelTen Energy data.
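To put the 9% increases in dollar terms, the year-earlier prices can be backed out from the reported figures. This is a minimal sketch assuming the 9% is a simple year-over-year change on the quoted averages. Because the percentage is rounded, the results are approximate. The one-terawatt-hour annual contract volume is a purely hypothetical example, not a figure from the reporting.

```python
# Back out year-earlier PPA prices from the reported Q4 2025 averages.
# Assumption: "9%" is a simple YoY change on the quoted averages (rounded).

YOY_INCREASE = 0.09

q4_2025_prices = {  # USD per megawatt hour (Reuters, LevelTen Energy data)
    "solar": 61.7,
    "wind": 73.7,
}

# Hypothetical buyer contracting 1 TWh (1,000,000 MWh) per year; illustrative only.
HYPOTHETICAL_ANNUAL_MWH = 1_000_000


def prior_year_price(current: float, yoy: float) -> float:
    """Price one year earlier, given the current price and its YoY change."""
    return current / (1 + yoy)


for tech, price in q4_2025_prices.items():
    earlier = prior_year_price(price, YOY_INCREASE)
    delta = price - earlier
    extra_cost = delta * HYPOTHETICAL_ANNUAL_MWH
    print(
        f"{tech}: ~${earlier:.1f}/MWh a year earlier -> ${price:.1f}/MWh "
        f"(+${delta:.1f}/MWh, ~${extra_cost / 1e6:.1f}M more per hypothetical TWh)"
    )
```

For a large buyer, a few dollars per megawatt hour adds up to millions of dollars a year, which helps explain why clean power contracts are drawing more scrutiny.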

Supply is part of the squeeze. Reuters also reported that policy changes accelerating the expiration of U.S. tax credits helped trigger a wave of project cancellations in 2025 covering 266 gigawatts of capacity, the vast majority of it tied to clean energy projects.

Meanwhile, demand is not waiting. The same Reuters report cited EPRI estimates that data centers could account for 9% of U.S. electricity demand by 2030, up from 4% in 2025, and noted that developers are planning tens of gigawatts of on-site power generation to secure reliable supply. That has real consequences for everyone else’s electric bill when peak demand hits on a sticky summer day.

Cooling is pulling water into the center of the conversation

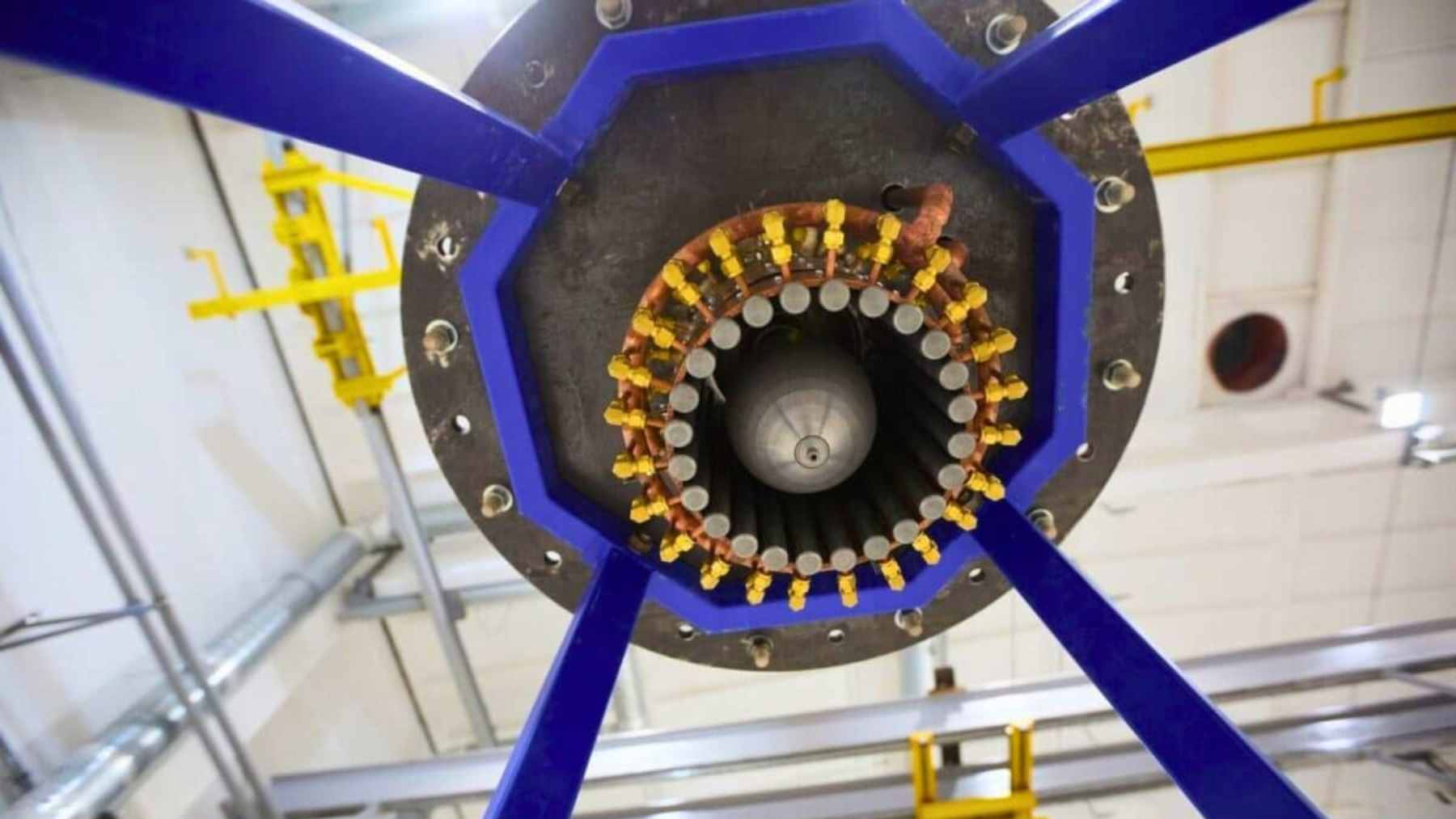

High-density AI racks are pushing the industry toward liquid cooling, which can be more efficient than moving massive volumes of air. That shift is not just a technical footnote; it is becoming a major business in its own right.

On the water side, the numbers can be startling. The Environmental and Energy Study Institute notes that large data centers can consume up to 5 million gallons of water per day, with water use rising alongside energy use as facilities scale for AI workloads.

There is also a tradeoff that rarely fits into a keynote slide. MSCI points out that cooling can account for about 20% to 40% of a data center’s total energy use, and that water-based cooling may reduce electricity demand but increase water consumption, whereas air-based approaches can do the opposite.

Security concerns are now part of the environmental story

When an AI agent can take actions on a system, it is not just a productivity tool; it is also a new attack surface. China’s industry ministry warned earlier this year that OpenClaw could pose significant security risks if misconfigured, potentially exposing users to cyberattacks and data breaches, though the warning stopped short of a ban.

In the U.S., NIST’s Center for AI Standards and Innovation has also zeroed in on the same risk category. In a January 2026 notice, NIST said agent systems capable of taking autonomous actions that impact real-world systems can create unique security challenges, with potential implications for public safety and national security.

This is where tech, defense-minded risk planning, and climate goals start to overlap. The more society relies on automated agents to move money, manage infrastructure, and operate critical workflows, the more pressure there will be to prove those systems are secure, energy-aware, and responsible about water use, not just fast.

The press release was published on “NVIDIA Investor Relations.”