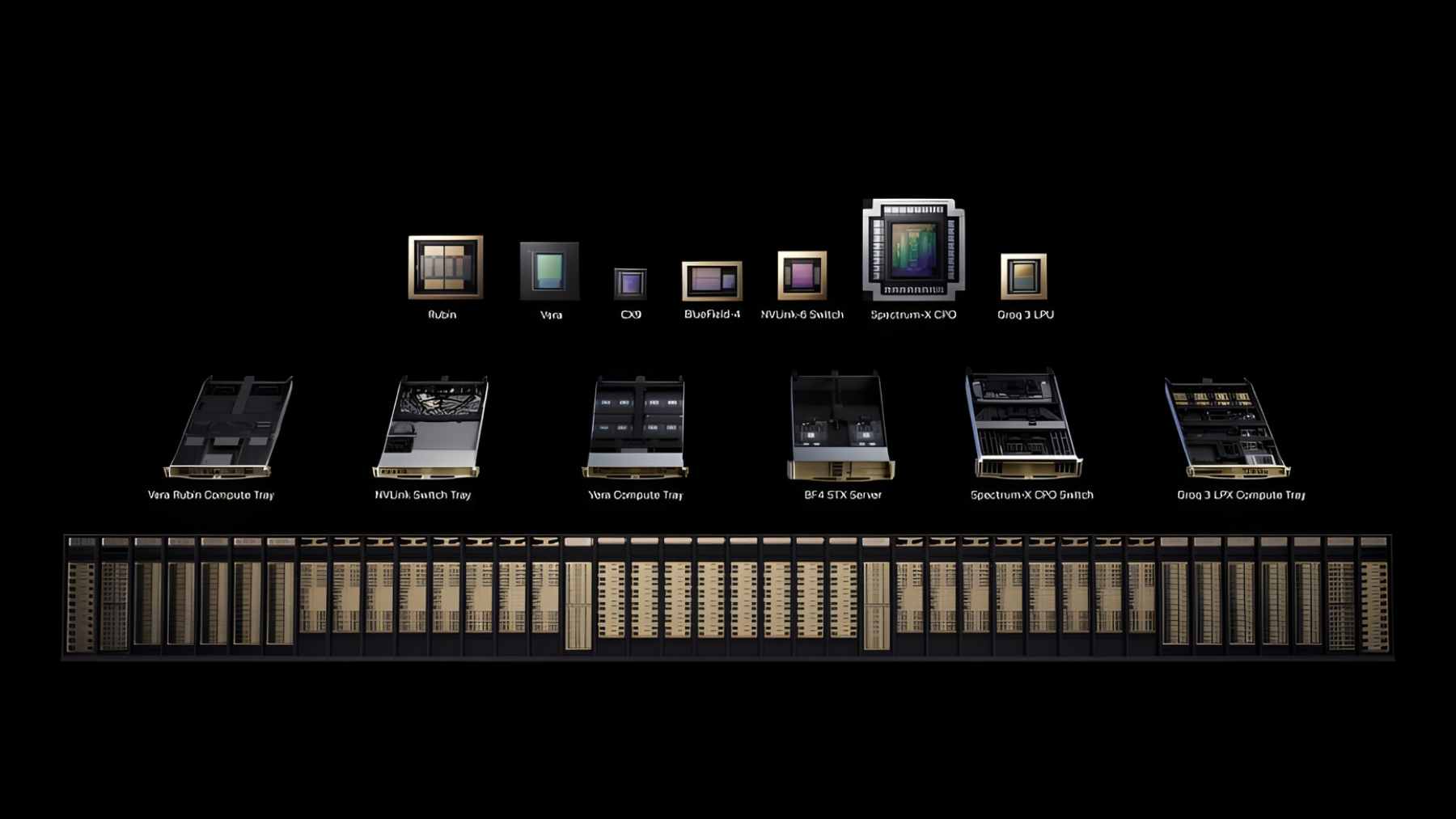

Amazon Web Services (AWS) and Nvidia used NVIDIA GTC 2026 to announce a deeper partnership, with AWS saying it will add more than 1 million Nvidia GPUs across its cloud regions starting in 2026, including Blackwell and Rubin architectures.

Reuters also reported on March 19, 2026, that Nvidia expects to sell 1 million GPUs to AWS by the end of 2027 as part of a broader package of chips and data center gear.

This is a business milestone, but it is also an ecology story in disguise. If the cloud is where AI lives, who powers it and who cools it? The International Energy Agency (IEA) estimates that data centers used about 415 terawatt-hours (TWh) of electricity in 2024, and it projects that consumption will more than double, to around 945 TWh, by 2030, with AI as a major driver.
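As a rough check on what "more than double" means year to year, the short Python sketch below computes the compound annual growth rate implied by the two IEA figures. The `cagr` helper is hypothetical and purely illustrative, and it assumes smooth compounding between 2024 and 2030, which the IEA projection itself does not claim.

```python
# Implied compound annual growth rate (CAGR) between the two IEA data points.
# Assumption: growth compounds evenly each year; this is simple arithmetic
# on the quoted figures, not a growth rate published by the IEA.

def cagr(start: float, end: float, years: int) -> float:
    """Return the constant yearly rate that turns `start` into `end`."""
    return (end / start) ** (1 / years) - 1

start_twh, end_twh = 415.0, 945.0  # 2024 estimate and 2030 projection
years = 2030 - 2024

rate = cagr(start_twh, end_twh, years)
print(f"Ratio: {end_twh / start_twh:.2f}x over {years} years")
print(f"Implied growth: {rate:.1%} per year")
```

With these inputs the ratio is about 2.28x, which works out to roughly 14.7% growth per year, somewhat faster than the roughly 12% annual pace the IEA reports for data centers since 2017.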

What AWS is buying

Nvidia executive Ian Buck told Reuters the sales would begin in 2026 and extend through 2027. Reuters says the deal also includes Nvidia’s ConnectX and Spectrum-X networking gear inside AWS data centers, which matters because AWS has long leaned on custom networking hardware of its own.

The other big clue is how AWS plans to use those GPUs. Buck said AWS intends to pair Nvidia’s “Groq” chips with six other Nvidia chips for more efficient inference, and he summed up the challenge bluntly: “Inference is hard. It’s wickedly hard.”

Inference is the ‘always on’ workload

Training a frontier model is the splashy part, but inference is the daily grind. Every time someone asks an AI assistant for a summary, a route, or a shopping recommendation, servers have to generate the next token and then the next one.

That is why performance per watt is now a competitive metric, not just a sustainability slogan. Nvidia says its Blackwell GPUs are generally more than 50 times more energy efficient than “traditional CPUs” for large language model inference workloads, and it says its DPUs can reduce power consumption by offloading data center infrastructure functions from CPUs.

Even with better chips, the scale is enormous. The IEA says data center electricity use has grown by around 12% per year since 2017, and it estimates that a typical AI-focused data center can consume as much electricity as 100,000 households, while the largest sites under construction are designed to draw about 20 times that.
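The growth rate and the household comparison can be turned into concrete multipliers with a little arithmetic. This is a back-of-the-envelope sketch, assuming the 12% rate holds every year from 2017 through 2024 (the end year is an assumption, chosen to match the IEA's 2024 estimate); the variable names are illustrative.

```python
# Back-of-the-envelope checks on the IEA figures quoted in the article.
# Assumption: 12% growth applies every year from 2017 through 2024.

GROWTH_RATE = 0.12
START_YEAR, END_YEAR = 2017, 2024

years = END_YEAR - START_YEAR
multiplier = (1 + GROWTH_RATE) ** years  # cumulative growth factor
print(f"{years} years at {GROWTH_RATE:.0%}/yr -> {multiplier:.2f}x")

# Household equivalents: a typical AI-focused site vs. the largest builds.
typical_site_households = 100_000
largest_site_factor = 20
largest_site_households = typical_site_households * largest_site_factor
print(f"Largest sites: about {largest_site_households:,} households")
```

Compounding 12% over seven years roughly doubles consumption (about 2.21x), and 20 times 100,000 households puts the largest sites at around 2 million household equivalents.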

Electricity is local, and bottlenecks show up fast

A single hyperscale campus can change a regional grid in ways a national statistic never captures. The IEA warns that, unless risks are addressed, around 20% of planned data center projects could face delays because of grid constraints, long connection queues, and slow buildouts of key equipment.

Amazon points to clean energy as part of its answer. The company says it matched 100% of the electricity consumed across its global operations, including data centers, with renewable energy in 2023, years ahead of its original timeline.

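Even so, matching annual consumption with renewable energy purchases does not by itself add transmission lines or substations near a data center, and local grid upgrades are the kind of slow-moving project people notice when the electric bill climbs.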

Water and heat are moving up the agenda

Cooling is where climate stress can feel personal, especially in sticky summer heat when everyone is running air conditioning at once. AWS says it aims to be “water positive” by 2030, meaning it plans to return more water to communities and the environment than it uses in its direct data center operations. It says it was 53% of the way toward that goal in 2024.

There is also growing scrutiny over how water impacts are counted. One recent investigation argued that water tied to electricity generation can be left out of public reporting.

Defense demand sits inside the same infrastructure

The cloud is not just for startups shipping the next app update. The U.S. Department of Defense says its Joint Warfighting Cloud Capability contracts went to AWS, Google, Microsoft, and Oracle to provide cloud services “at all classification levels” and “to the tactical edge.”

From an environmental standpoint, defense workloads add another twist. They often come with strict resilience requirements, which can mean more redundancy and more always-on capacity, even when the hardware is improving.

The question hanging over the AI buildout

For the most part, the near-term issue is not whether AI is “good” or “bad.” It is whether the physical systems underneath it can scale without locking communities into higher emissions and tighter water stress.

That is why the 1-million-GPU headline matters: it signals that the next constraint may be power, water, and permitting rather than silicon. In practical terms, companies that can demonstrate lower energy per query, credible clean power, and realistic water plans will likely have an edge.

The official statement was published on the AWS Machine Learning Blog.