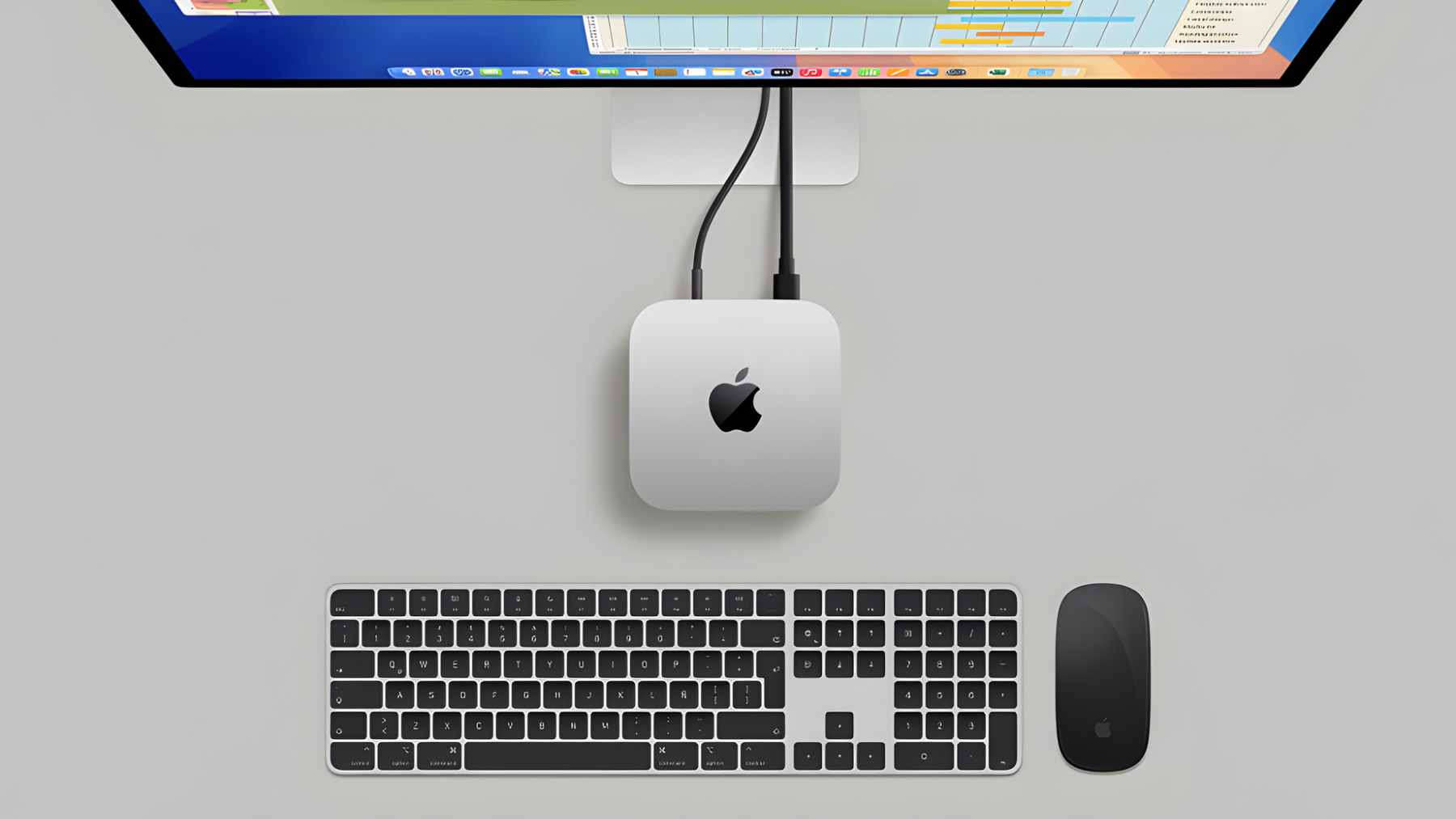

If you have ever looked at a Mac mini and wished it could run heavyweight AI without renting a cloud server, something just changed. Tiny Corp says Apple has approved its TinyGPU driver, which lets external AMD and Nvidia GPUs connect over Thunderbolt or USB4 and act as AI accelerators on Apple Silicon Macs.

In plain terms, a modest little desktop can now handle local AI work that used to demand a far pricier setup.

The timing is not random. Apple has discontinued the Mac Pro, its last truly modular desktop, leaving many pros with fewer ways to scale up on macOS using official hardware.

At the same time, the International Energy Agency says data centers consumed about 415 TWh of electricity in 2024 and could rise to around 945 TWh by 2030 in its base case, which keeps the AI power debate front and center.

TinyGPU makes eGPUs useful on Macs again

TinyGPU is not the old eGPU story where you plug in a box and suddenly your games run faster on an external monitor. Reports and documentation describe a narrower focus where the external card is meant for AI compute rather than traditional graphics acceleration. That distinction matters because it sets expectations right away.

The requirements are specific, but clear. TinyGPU targets macOS 12.1 and later, uses a USB4 or Thunderbolt connection, and supports AMD RDNA3 and newer GPUs plus Nvidia Ampere and newer GPUs. The docs also note that Nvidia users may rely on Docker Desktop for parts of the workflow.

Tiny Corp has framed Apple’s approval as the practical breakthrough because it avoids the reputation of “hack it until it works” setups. Several reports say the point is to enable installation without bypassing System Integrity Protection, which has been a long-standing hurdle for advanced users on Apple Silicon.

AI compute now comes with a power bill question

Step back for a second and look at the bigger trend line. The IEA estimates data centers used around 415 TWh of electricity in 2024, about 1.5% of global electricity consumption, and projects global data center electricity demand could roughly double to about 945 TWh by 2030 in its base case.

That growth is driven by many workloads, but AI is one of the main reasons investment is accelerating.
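
To put "roughly double" in more concrete terms, here is a minimal sketch that turns the two IEA figures quoted above into a growth factor and an implied yearly growth rate. The smooth compound-growth assumption is ours, not the IEA's, and the variable names are only illustrative.

```python
# Figures quoted in the text from the IEA (TWh of electricity per year).
demand_2024_twh = 415.0
demand_2030_twh = 945.0
years = 2030 - 2024

# How many times larger the 2030 base case is than the 2024 estimate.
growth_factor = demand_2030_twh / demand_2024_twh

# Assumption: demand grows at a constant compound rate between the endpoints.
implied_annual_growth = growth_factor ** (1 / years) - 1

print(f"Growth factor 2024 -> 2030: {growth_factor:.2f}x")
print(f"Implied compound annual growth: {implied_annual_growth:.1%}")
```

Under that assumption, the base case works out to a bit more than a doubling, or somewhat under 15% growth per year.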

So is running a model on your desk greener than calling an API? Sometimes, but not by default. Local inference can reduce repeated network transfers and keep certain jobs off shared cloud GPUs, but an external graphics card can also draw a lot of power and dump heat into your room, which you will notice when the fans ramp up and the electric bill lands.

In practical terms, the environmental upside depends on what you replace. If TinyGPU helps a team stop sending every experiment to the cloud, that can shift demand away from always-on remote infrastructure. But if it mostly nudges people into buying new high-end GPUs for casual tinkering, the “green” story gets complicated fast.

A potential win against e-waste

E-waste keeps piling up, whether we talk about AI or not. The Global E-waste Monitor reports 62 million tonnes of e-waste generated worldwide in 2022, and only 22.3% was documented as formally collected and recycled. The rest is a messy mix of storage, informal processing, and disposal that can spill pollution into communities.

This is where the Mac mini angle gets interesting, and it is not just marketing. In Apple’s own Product Environmental Report for Mac mini, the company says the device contains more than 50% recycled content by weight and that 100% of manufacturing electricity is sourced from renewable energy.

Apple also publishes per-configuration carbon footprint estimates, including a Mac mini with M4 listed at 32 kg CO2e before carbon credits are applied.

None of that erases the footprint of a new GPU, of course. Still, life cycle summaries often show that production is the biggest chunk of a personal computer’s carbon footprint, which is why keeping a working computer in service longer can matter.

If eGPU-style modularity returns as an option for AI, fewer users may feel forced into full system replacements just to run modern models.

Why business and defense care about edge AI

For businesses, the pitch is straightforward, and it is not only about speed. Running AI locally can keep sensitive data on premises and reduce per-query cloud spending, while letting organizations reuse existing Macs as compact inference boxes. If you are a small company watching both budgets and sustainability targets, that kind of flexibility is hard to ignore.

Defense planners have similar motivations, with higher stakes and stricter constraints. CSIS notes that edge AI processes data locally, which can reduce latency and limit exposure of raw data across contested networks. But once compute moves closer to the field, energy and power logistics become a constraint, not an afterthought.

That concern is showing up in the policy conversation. Carnegie has argued the Pentagon should acknowledge energy use as a core issue in its AI and cloud efforts and prioritize efficiency metrics, while NATO has highlighted climate impacts and energy security as risks to infrastructure and operations.

When you zoom out, the tech story and the climate story are increasingly the same story.

What to watch before you plug one in

TinyGPU does not magically turn every Mac into a fully modular workstation. Reporting and documentation stress that the setup is aimed at AI computation, not display acceleration, and the data link over USB4 or Thunderbolt can be a real bottleneck for certain workloads.

That means performance can vary a lot depending on the model, the batch size, and the kind of AI task you are running.
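
A rough way to see why the link matters is to estimate how long it takes to move data across it. The sketch below is a back-of-the-envelope calculation, not a benchmark: the 40 Gbit/s figure is the nominal Thunderbolt and USB4 maximum, while the 0.8 efficiency factor, the payload sizes, and the function name `transfer_seconds` are all illustrative assumptions.

```python
def transfer_seconds(payload_gb: float, link_gbit_per_s: float,
                     efficiency: float = 0.8) -> float:
    """Estimate the seconds needed to move payload_gb gigabytes over a link.

    efficiency is an assumed fraction of the nominal rate that is usable
    for data after protocol overhead; real values will differ.
    """
    usable_gbyte_per_s = link_gbit_per_s * efficiency / 8  # bits -> bytes
    return payload_gb / usable_gbyte_per_s


# Hypothetical workload: 16 GB of model weights loaded once,
# and 0.5 GB of data shipped to the card on every step.
load_once = transfer_seconds(16, 40)
per_step = transfer_seconds(0.5, 40)

print(f"One-time weight load: {load_once:.1f} s")
print(f"Per-step transfer:    {per_step * 1000:.0f} ms")
```

The pattern it illustrates: a one-time load of model weights is a fixed cost, but anything that crosses the cable on every step adds up, which is one reason results can differ so much between tasks.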

It is also worth thinking about what you attach and why. Reusing an existing GPU, choosing a more efficient card when you do upgrade, and scheduling heavy runs when your local grid is cleaner can all reduce the footprint. It also helps keep the home office experience tolerable, because nobody enjoys a space heater effect in April.
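
If you want to reason about those choices with numbers, a simple estimate multiplies power draw by run time, then by your electricity price and your grid's carbon intensity. The sketch below uses placeholder inputs throughout (a 300 W average draw, a 4 hour run, two made-up grid intensities, and a made-up price), so swap in your own measurements and local figures. The function name `run_footprint` is hypothetical.

```python
def run_footprint(avg_watts: float, hours: float,
                  grid_g_co2_per_kwh: float, price_per_kwh: float) -> dict:
    """Estimate energy, cost, and emissions for one compute run."""
    energy_kwh = avg_watts * hours / 1000
    return {
        "energy_kwh": energy_kwh,
        "cost": energy_kwh * price_per_kwh,
        "co2_kg": energy_kwh * grid_g_co2_per_kwh / 1000,  # grams -> kg
    }


# Placeholder values only: the same run on a dirtier and a cleaner grid hour.
dirtier_hour = run_footprint(300, 4, grid_g_co2_per_kwh=450, price_per_kwh=0.30)
cleaner_hour = run_footprint(300, 4, grid_g_co2_per_kwh=150, price_per_kwh=0.30)

for label, result in (("dirtier", dirtier_hour), ("cleaner", cleaner_hour)):
    print(f"{label}: {result['energy_kwh']:.2f} kWh, "
          f"{result['co2_kg']:.2f} kg CO2, cost {result['cost']:.2f}")
```

The energy used is identical in both cases. Only the emissions change with the timing, which is the whole argument for scheduling heavy runs.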

At the end of the day, Apple’s approval of a niche driver is a reminder that “green AI” is not only a data center debate. Sometimes it is about making small computers last longer, and being honest about the power they consume when you push them hard.